Background

The availability and prevalence of high-quality gratis email providers have led people to overlook the true cost of using these services. These companies hope to collect enough valuable information from reading your email to offset the cost of providing the service for free. A necessary step toward regaining control over your email and maintaining your privacy is to host your own private email server using free (as in freedom) software.

There are several components involved in an email server, and for each one you must choose between alternative free software projects. At a high level, the primary components are the MTA, MDA, and MUA.

Mail Transfer Agent (MTA)

A Mail Transfer Agent (MTA) uses SMTP to send and receive email. I recommend Postfix unless special circumstances require the features of one of the alternative MTAs.

- Sendmail – perhaps the best-known MTA, but notoriously hard to configure and with a long history of security vulnerabilities

- qmail – ideal for size-constrained environments, but doesn’t support modern email standards

- Exim – very flexible, powerful, and general purpose, but not as fast or as secure as Postfix

- Postfix – modern, secure, and efficient, but has a restricted feature set by design

Mail Delivery Agent (MDA)

A Mail Delivery Agent (MDA) receives messages from an MTA and delivers them to a local mailbox. Users then typically retrieve their mail from that mailbox using either Post Office Protocol (POP) or Internet Message Access Protocol (IMAP). I recommend Dovecot over the alternatives.

- Cyrus – difficult to configure and non-standard format

- Courier – consumes more server resources and is slower and less secure than Dovecot

- Dovecot – modern, fast, and secure

Mail User Agent (MUA)

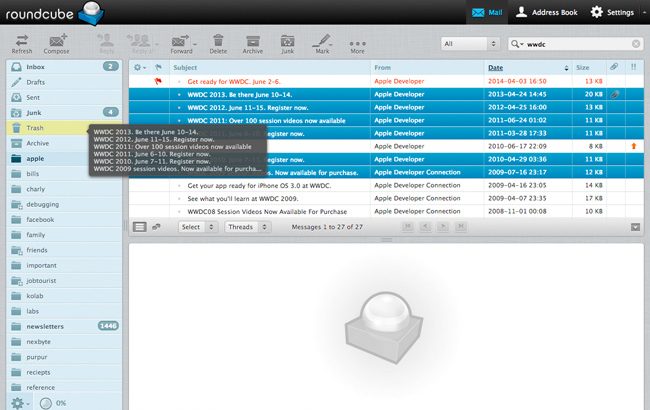

A Mail User Agent (MUA) is a program used by the end user to read and process mail. An MUA can be a local email client such as Thunderbird, or it can be a remote webmail server that users reach through a web browser. If you want to host a webmail server, I recommend Roundcube unless you want the additional features offered by Horde, such as mobile email access.

- Squirrelmail – very simple but doesn’t have spell check or support HTML composition

- Horde – has a more complete feature set, including mobile email access and productivity features such as event reminders, a news feed, notes, and a calendar

- Roundcube – very user friendly, modern, and pretty

Setup PHP and MySQL

Prerequisites

On Debian 9 GNU/Linux, install the following PHP and MySQL packages:

sudo apt-get install -y php5-fpm php5-imap php5-mysql php5-mcrypt php5-intl mysql-server mysql-client

Configuration

Set the timezone in the PHP configuration file:

sudo sed -i -e 's/^;date\.timezone =$/date.timezone = America\/Chicago/' /etc/php5/fpm/php.ini
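The sed pattern above is anchored at both ends, so it only matches a line that reads exactly `;date.timezone =`. If the line has trailing whitespace or already carries a value, sed changes nothing and reports no error. A minimal dry run on a scratch file (the scratch file and its contents are assumptions standing in for the real php.ini) shows what a successful substitution looks like:

```shell
#!/bin/sh
# Dry run of the timezone substitution on a scratch file, not the real php.ini.
set -eu

scratch=$(mktemp)

# A stock php.ini ships the setting commented out and empty (assumed here).
printf '%s\n' '[Date]' ';date.timezone =' > "$scratch"

# Same sed expression as in the step above, minus sudo and the real path.
sed -i -e 's/^;date\.timezone =$/date.timezone = America\/Chicago/' "$scratch"

# Show the resulting line; empty output means the pattern did not match.
result=$(grep '^date\.timezone' "$scratch" || true)
echo "$result"   # prints: date.timezone = America/Chicago
rm -f "$scratch"
```

Running the same grep against /etc/php5/fpm/php.ini after the real edit is a quick way to confirm that the setting took effect.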

Create a new user and group:

sudo groupadd -g 1001 g1001

sudo useradd --no-create-home -g 1001 -u 1001 u1001

Create PHP fpm socket directory:

sudo mkdir -p /etc/php5/fpm/socks

Remove the default FastCGI Process Manager (FPM) pool:

sudo rm -f /etc/php5/fpm/pool.d/www.conf

Create a new FPM pool configuration file, /etc/php5/fpm/pool.d/ssl_example.com.conf, with the following contents:

[ssl_example.com]

listen = /etc/php5/fpm/socks/ssl_example.com.sock

user = u1001

group = g1001

listen.owner = www-data

listen.group = www-data

listen.mode = 0666

pm = dynamic

pm.max_children = 50

pm.start_servers = 1

pm.min_spare_servers = 1

pm.max_spare_servers = 5

pm.max_requests = 0

php_admin_value[open_basedir]=/

php_admin_value[session.save_path]=/home/clients_ssl/example.com/tmp

php_admin_value[upload_tmp_dir]=/home/clients_ssl/example.com/tmp

php_admin_value[disable_functions]=dl

Ensure that the file has the proper permissions:

sudo chmod 644 /etc/php5/fpm/pool.d/ssl_example.com.conf

Start the PHP FPM service:

sudo systemctl start php5-fpm.service

Setup Postfix

Prerequisites

On Debian 9 GNU/Linux, install the following Postfix packages:

sudo apt-get install -y postfixadmin libsasl2-modules libsasl2-modules-sql postfix postfix-mysql

Configuration

Create the postfix database and its two users. The -p flag makes mysql prompt for the root password; a password typed after a space would be interpreted as a database name.

sudo mysql -u root -p -e "CREATE DATABASE postfix; GRANT ALL PRIVILEGES ON postfix.* TO 'postfix_admin'@'%' IDENTIFIED BY 'dbpassword1'; GRANT SELECT ON postfix.* TO 'postfix'@'%' IDENTIFIED BY 'dbpassword2'; FLUSH PRIVILEGES;"

Create /etc/postfix/main.cf with the following contents:

myorigin = /etc/mailname

smtpd_banner = $myhostname ESMTP $mail_name (Debian/GNU)

biff = no

append_dot_mydomain = no

readme_directory = no

smtpd_tls_cert_file=/etc/ssl/certs/ssl-cert-snakeoil.pem

smtpd_tls_key_file=/etc/ssl/private/ssl-cert-snakeoil.key

smtpd_use_tls=yes

smtp_tls_CAfile = /etc/ssl/certs/ca-certificates.crt

smtpd_tls_session_cache_database = btree:${data_directory}/smtpd_scache

smtp_tls_session_cache_database = btree:${data_directory}/smtp_scache

smtpd_relay_restrictions = permit_mynetworks permit_sasl_authenticated defer_unauth_destination

myhostname = myhost.example.com

alias_maps = hash:/etc/aliases

alias_database = hash:/etc/aliases

mydestination = localhost

relayhost = [smtp.myisp.net]:587

mynetworks = 127.0.0.0/8 192.168.253.0/24 [::ffff:127.0.0.0]/104 [::1]/128

mailbox_command = procmail -a "$EXTENSION"

mailbox_size_limit = 0

recipient_delimiter = +

inet_interfaces = all

virtual_uid_maps = static:3000

virtual_gid_maps = static:3000

virtual_mailbox_base = /home/vmail

virtual_mailbox_domains = mysql:/etc/postfix/mysql_virtual_mailbox_domains.cf

virtual_mailbox_maps = mysql:/etc/postfix/mysql_virtual_mailbox_maps.cf

virtual_alias_maps = mysql:/etc/postfix/mysql_virtual_alias_maps.cf

relay_domains = mysql:/etc/postfix/mysql_relay_domains.cf

virtual_transport = lmtp:unix:private/dovecot-lmtp

smtpd_recipient_restrictions =

  permit_mynetworks,

  permit_sasl_authenticated,

  reject_non_fqdn_hostname,

  reject_non_fqdn_sender,

  reject_non_fqdn_recipient,

  reject_unauth_destination,

  check_policy_service unix:private/policyd-spf,

  reject_unauth_pipelining,

  reject_invalid_hostname

smtpd_sasl_auth_enable = yes

smtpd_sasl_security_options = noanonymous

smtp_sasl_auth_enable = yes

smtp_sasl_password_maps = hash:/etc/postfix/sasl_passwd

smtp_sasl_security_options =

smtpd_sasl_type = dovecot

smtpd_sasl_path = private/auth
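The main.cf above points smtp_sasl_password_maps at hash:/etc/postfix/sasl_passwd, a file that still has to be created. The following is a minimal sketch of one way to do that. The relay credentials (`myuser`, `mypassword`) and the `POSTFIX_DIR`, `RELAY_USER`, and `RELAY_PASS` variables are hypothetical placeholders, and the script writes to a scratch directory unless `POSTFIX_DIR` is set. The lookup key has to match the relayhost value exactly, brackets and port included.

```shell
#!/bin/sh
set -eu

# POSTFIX_DIR defaults to a scratch directory so the sketch can be tried safely;
# for real use, run as root with POSTFIX_DIR=/etc/postfix.
POSTFIX_DIR=${POSTFIX_DIR:-$(mktemp -d)}

# Hypothetical placeholder credentials for the ISP relay.
RELAY_USER=${RELAY_USER:-myuser}
RELAY_PASS=${RELAY_PASS:-mypassword}

# Create the file readable by its owner only, since it holds a password.
umask 077
printf '%s %s:%s\n' '[smtp.myisp.net]:587' "$RELAY_USER" "$RELAY_PASS" \
    > "$POSTFIX_DIR/sasl_passwd"

# Build the hash: lookup table when postmap is installed.
if command -v postmap >/dev/null 2>&1; then
    postmap "hash:$POSTFIX_DIR/sasl_passwd"
fi

cat "$POSTFIX_DIR/sasl_passwd"
```

Postfix reads the compiled table rather than the text file, so postmap has to be rerun whenever the credentials change.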

Create /etc/postfix/mysql_virtual_mailbox_domains.cf with the following contents:

hosts = 127.0.0.1

user = postfix

password = dbpassword2

dbname = postfix

query = SELECT domain FROM domain WHERE domain='%s' AND backupmx = 0 AND active = 1

Create /etc/postfix/mysql_virtual_mailbox_maps.cf with the following contents:

hosts = 127.0.0.1

user = postfix

password = dbpassword2

dbname = postfix

query = SELECT maildir FROM mailbox WHERE username='%s' AND active = 1

Create /etc/postfix/mysql_virtual_alias_maps.cf with the following contents:

hosts = 127.0.0.1

user = postfix

password = dbpassword2

dbname = postfix

query = SELECT goto FROM alias WHERE address='%s' AND active = 1

Create /etc/postfix/mysql_relay_domains.cf with the following contents:

hosts = 127.0.0.1

user = postfix

password = dbpassword2

dbname = postfix

query = SELECT domain FROM domain WHERE domain='%s' AND backupmx = 1
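Once the four lookup files exist and the database has some rows in it, each map can be queried directly with `postmap -q`, which is usually quicker than debugging a failed delivery. The helper below is a small sketch; `check_map` is a hypothetical name, and the example keys in the note that follows are assumptions about what your database contains.

```shell
#!/bin/sh
# check_map MAP_FILE KEY
# Query one Postfix MySQL map and report whether the key was found.
check_map() {
    map=$1 key=$2
    # postmap -q prints the lookup result and succeeds only on a hit.
    if value=$(postmap -q "$key" "mysql:$map" 2>/dev/null) && [ -n "$value" ]; then
        echo "OK   $map: $key -> $value"
    else
        echo "MISS $map: $key"
    fi
}
```

For example, `check_map /etc/postfix/mysql_virtual_mailbox_domains.cf example.com` should report OK for a hosted domain, and `check_map /etc/postfix/mysql_virtual_mailbox_maps.cf user@example.com` should report OK for an existing mailbox. A MISS on a row you know exists usually points at the credentials or the query in that file.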

Create /etc/postfix/master.cf with the following contents:

smtp inet n - y - - smtpd -v

submission inet n - y - - smtpd

  -o syslog_name=postfix/submission

  -o smtpd_tls_security_level=encrypt

  -o smtpd_sasl_auth_enable=yes

  -o smtpd_client_restrictions=permit_sasl_authenticated,reject

  -o milter_macro_daemon_name=ORIGINATING

smtps inet n - y - - smtpd

  -o syslog_name=postfix/smtps

  -o smtpd_tls_wrappermode=yes

  -o smtpd_sasl_auth_enable=yes

  -o smtpd_client_restrictions=permit_sasl_authenticated,reject

  -o milter_macro_daemon_name=ORIGINATING

pickup unix n - y 60 1 pickup

cleanup unix n - y - 0 cleanup

qmgr unix n - n 300 1 qmgr

tlsmgr unix - - y 1000? 1 tlsmgr

rewrite unix - - y - - trivial-rewrite

bounce unix - - y - 0 bounce

defer unix - - y - 0 bounce

trace unix - - y - 0 bounce

verify unix - - y - 1 verify

flush unix n - y 1000? 0 flush

proxymap unix - - n - - proxymap

proxywrite unix - - n - 1 proxymap

smtp unix - - y - - smtp

relay unix - - y - - smtp

showq unix n - y - - showq

error unix - - y - - error

retry unix - - y - - error

discard unix - - y - - discard

local unix - n n - - local

virtual unix - n n - - virtual

lmtp unix - - y - - lmtp

anvil unix - - y - 1 anvil

scache unix - - y - 1 scache

maildrop unix - n n - - pipe

  flags=DRhu user=vmail argv=/usr/bin/maildrop -d ${recipient}

uucp unix - n n - - pipe

  flags=Fqhu user=uucp argv=uux -r -n -z -a$sender - $nexthop!rmail ($recipient)

ifmail unix - n n - - pipe

  flags=F user=ftn argv=/usr/lib/ifmail/ifmail -r $nexthop ($recipient)

bsmtp unix - n n - - pipe

  flags=Fq. user=bsmtp argv=/usr/lib/bsmtp/bsmtp -t$nexthop -f$sender $recipient

scalemail-backend unix - n n - 2 pipe

  flags=R user=scalemail argv=/usr/lib/scalemail/bin/scalemail-store ${nexthop} ${user} ${extension}

mailman unix - n n - - pipe

  flags=FR user=list argv=/usr/lib/mailman/bin/postfix-to-mailman.py

  ${nexthop} ${user}

policyd-spf unix - n n - 0 spawn

  user=policyd-spf argv=/usr/bin/policyd-spf
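Each service entry in master.cf has eight whitespace-separated columns: service name, type, private, unpriv, chroot, wakeup, maxproc, and command. Lines that begin with whitespace continue the previous entry. As a rough sanity check after editing, the sketch below lists the entries a file defines. `list_services` is a hypothetical helper, and it runs here on a scratch excerpt rather than the live file.

```shell
#!/bin/sh
# list_services FILE: print "service/type -> command" for each master.cf entry.
list_services() {
    # Entry lines start in column one and have at least eight fields;
    # comments, blank lines, and indented continuation lines are skipped.
    awk '!/^[ \t]/ && !/^#/ && NF >= 8 { printf "%s/%s -> %s\n", $1, $2, $8 }' "$1"
}

# Try it on a scratch excerpt of the file above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
smtp inet n - y - - smtpd -v
submission inet n - y - - smtpd
  -o syslog_name=postfix/submission
pickup unix n - y 60 1 pickup
EOF

listing=$(list_services "$sample")
echo "$listing"
rm -f "$sample"
```

On a live system, `postconf -M` prints the entries as Postfix itself parses them, and `postfix check` reports configuration problems.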

Create vmail user and group:

sudo groupadd -g 3000 vmail

sudo useradd -d /home/vmail -m -u 3000 -g 3000 vmail

sudo adduser postfix sasl

Start the postfix service:

sudo systemctl start postfix.service

Setup Dovecot

Prerequisites

On Debian 9 GNU/Linux, install the following Dovecot packages:

sudo apt-get install -y dovecot-core dovecot-mysql

Configuration

Configure /etc/dovecot/dovecot-sql.conf.ext:

driver = mysql

connect = host=127.0.0.1 dbname=postfix user=postfix password=dbpassword2

default_pass_scheme = MD5

user_query = SELECT '/home/vmail/%d/%n' AS home, 3000 AS uid, 3000 AS gid FROM mailbox WHERE username = '%u'

password_query = SELECT password FROM mailbox WHERE username = '%u'
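With default_pass_scheme = MD5, the password column of the mailbox table has to hold a hash that Dovecot can verify under that scheme. PostfixAdmin normally writes this column for you. If you ever need to insert a row by hand, the sketch below produces an MD5-crypt hash with openssl, on the assumption that Dovecot treats MD5 as an alias for MD5-CRYPT. The `MAIL_PASSWORD` variable and its default value are hypothetical placeholders.

```shell
#!/bin/sh
set -eu

# Placeholder password; read it from a prompt in real use.
plain=${MAIL_PASSWORD:-changeme}

# -1 selects the MD5-based crypt algorithm; a random salt is chosen on each run,
# so the output differs every time but always starts with $1$.
hash=$(openssl passwd -1 "$plain")
echo "$hash"
```

On a machine with Dovecot installed, `doveadm pw -s MD5-CRYPT` is the native way to generate the same kind of hash.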

Configure /etc/dovecot/conf.d/10-auth.conf:

auth_mechanisms = plain login

!include auth-sql.conf.ext

Configure /etc/dovecot/conf.d/10-mail.conf:

mail_location = maildir:/home/vmail/%d/%n:INDEX=/home/vmail/%d/%n/indexes

namespace inbox {

inbox = yes

}

Configure /etc/dovecot/conf.d/10-ssl.conf:

ssl = no

ssl_cert = </etc/dovecot/dovecot.pem

ssl_key = </etc/dovecot/private/dovecot.pem

Configure /etc/dovecot/conf.d/20-imap.conf:

protocol imap {

mail_max_userip_connections = 10

}

Configure /etc/dovecot/conf.d/auth-sql.conf.ext:

passdb {

driver = sql

args = /etc/dovecot/dovecot-sql.conf.ext

}

userdb {

driver = sql

args = /etc/dovecot/dovecot-sql.conf.ext

}

Configure /etc/dovecot/conf.d/10-master.conf:

service imap-login {

inet_listener imap {

}

inet_listener imaps {

}

}

service pop3-login {

inet_listener pop3 {

}

inet_listener pop3s {

}

}

service lmtp {

unix_listener /var/spool/postfix/private/dovecot-lmtp {

mode = 0600

user = postfix

group = postfix

}

}

service imap {

}

service pop3 {

}

service auth {

unix_listener auth-userdb {

}

unix_listener /var/spool/postfix/private/auth {

mode = 0666

}

}

service auth-worker {

}

service dict {

unix_listener dict {

}

}

Start the dovecot service:

sudo systemctl start dovecot.service
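At this point three services should be running. The sketch below prints one status line for each using `systemctl is-active`. `report_services` is a hypothetical helper name, and the unit names are taken from the start commands used in this guide.

```shell
#!/bin/sh
# Print one status line per service set up so far in this guide.
report_services() {
    for svc in php5-fpm postfix dovecot; do
        if systemctl is-active --quiet "$svc" 2>/dev/null; then
            echo "$svc: active"
        else
            echo "$svc: not active"
        fi
    done
}

report=$(report_services)
echo "$report"
```

For any service reported as not active, `sudo systemctl status` followed by the unit name shows the most recent log lines. `doveconf -n` prints Dovecot's non-default settings, which helps spot a typo in the files above.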

Setup Roundcube

Prerequisites

On Debian 9 GNU/Linux, install the following Roundcube packages:

sudo apt-get install -y roundcube roundcube-mysql

Configuration

Create and populate the roundcube database. Both commands prompt for a password; in the second, the trailing roundcube is the database name.

sudo mysql -u root -p -e "CREATE DATABASE roundcube; GRANT ALL PRIVILEGES ON roundcube.* TO roundcube@localhost IDENTIFIED BY 'rcpassword'; FLUSH PRIVILEGES;"

sudo mysql -u roundcube -p roundcube < /usr/share/roundcube/SQL/mysql.initial.sql

Enable roundcube and reload apache2 service:

sudo a2enconf roundcube

sudo systemctl reload apache2

Proxy MTA

One challenge you may face when hosting the server at home is that your residential ISP may block incoming connections to TCP port 25, which your MTA needs in order to receive email. One way around this problem is to point your MX record at a virtual server running a proxy MTA. Then set up a VPN tunnel between the remote virtual server and your local MTA, and have the proxy MTA forward all messages to your local MTA.
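If the proxy MTA is also Postfix, the forwarding can be expressed with relay_domains and a transport table. The sketch below generates both pieces into a scratch directory. The VPN address 10.8.0.2, the domain example.com, and the `PROXY_DIR`, `HOME_MTA`, and `MAIL_DOMAIN` variables are assumptions for illustration only.

```shell
#!/bin/sh
set -eu

# PROXY_DIR defaults to a scratch directory; on the real proxy it would be /etc/postfix.
PROXY_DIR=${PROXY_DIR:-$(mktemp -d)}
# Hypothetical address of the home MTA at the far end of the VPN tunnel.
HOME_MTA=${HOME_MTA:-10.8.0.2}
MAIL_DOMAIN=${MAIL_DOMAIN:-example.com}

# Settings to merge into the proxy's main.cf: accept mail for the domain,
# but relay it onward instead of delivering it locally.
cat > "$PROXY_DIR/main.cf.snippet" <<EOF
relay_domains = $MAIL_DOMAIN
transport_maps = hash:/etc/postfix/transport
EOF

# Transport table: the brackets around the address suppress the MX lookup.
printf '%s smtp:[%s]:25\n' "$MAIL_DOMAIN" "$HOME_MTA" > "$PROXY_DIR/transport"

cat "$PROXY_DIR/main.cf.snippet" "$PROXY_DIR/transport"
```

After merging the settings on the proxy, `postmap /etc/postfix/transport` compiles the table and `postfix reload` applies the change. The domain must not also appear in the proxy's mydestination, or the proxy will try to deliver the mail itself.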

Conclusion

Hosting your own email server is only the first step to regaining your email privacy. A major challenge is that very few people have the skills, time, or motivation to host their own email servers. As a result, most people you send emails to will be using email providers that spy on their users, so your communication with those people will still be subject to surveillance. Finally, plaintext emails can always be intercepted by a third party. To defend against this, you and your correspondents need to exchange GPG public keys and encrypt the contents of your emails.